Artificial intelligence. Neural networks. Large language models. Agents. Terms swirling through every boardroom, conference call, and casual coffee-shop conversation these days. We are truly living in the Age of the Algorithm. And honestly? It's exciting. It's terrifying. There's genuine possibility here, but who owns it? Who will it benefit?

I want to be clear-eyed about something. Exciting and ready are not the same thing. We're steering a very fast vehicle into a fog bank without gauges. Uncertainty can be a useful fuel for change, but there still needs to be a capable, trustworthy driver at the wheel. The question we should be asking is not whether AI is impressive. It clearly is. But is it actually improving things for everyone, or simply enhancing an already exclusionary world?

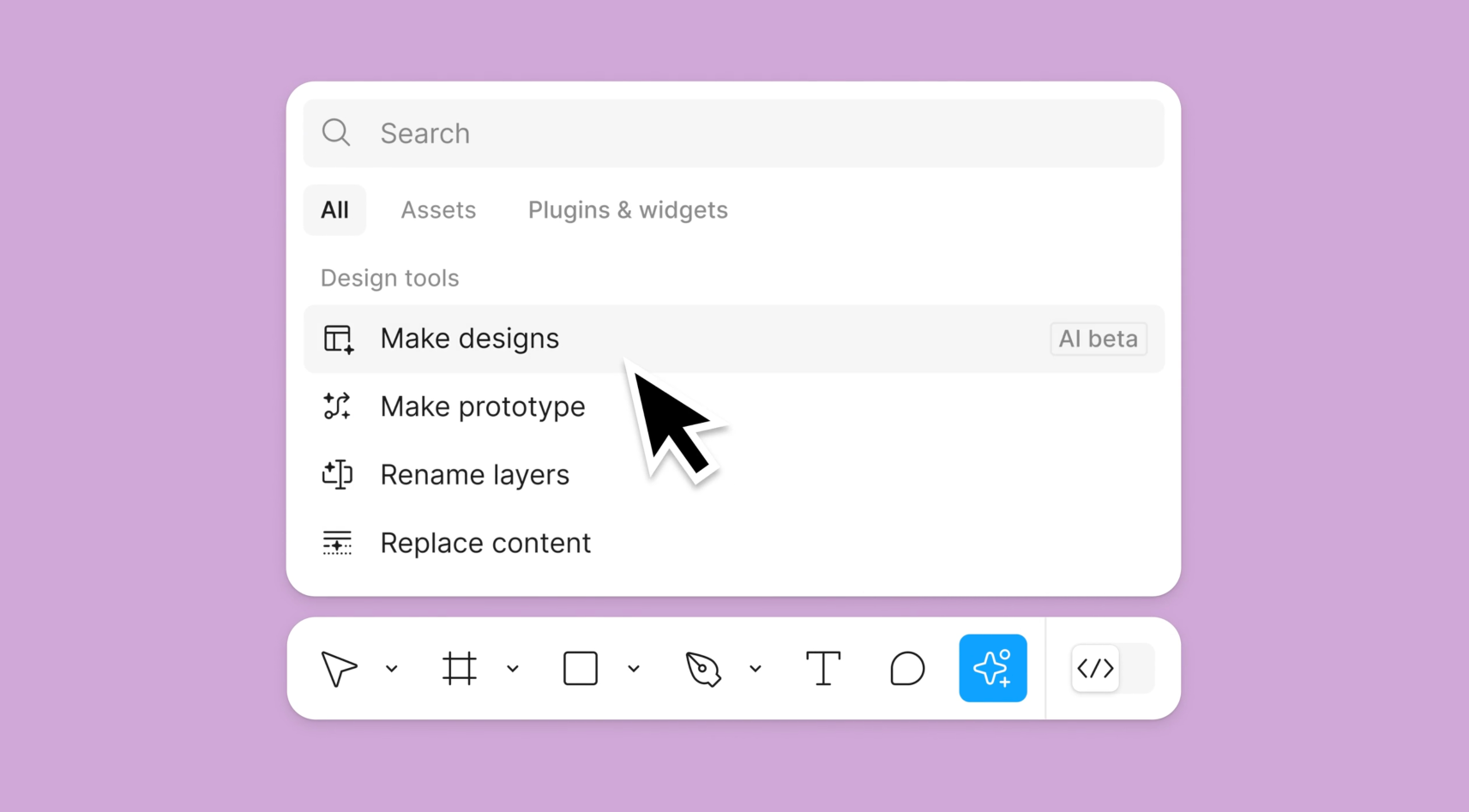

Last week, Figma introduced a suite of AI-powered features, including one called Make Designs, which generated no small amount of spirited debate in the design community. The premise is straightforward. Rather than manually building interface designs from scratch, designers should just let Figma generate them using AI. On the surface, this sounds like a dream come true. After all, who among us wants to spend all day nudging pixels by single-digit increments?

The deeper concern, though, isn't about pixel-pushing. It's about what happens when business stakeholders, who are already laser-focused on efficiency and cost, see AI producing decent-enough-looking interfaces in seconds. The mental math isn't hard to follow, and it's keeping a lot of designers up at night. It's more than just a new feature. It's a signal. A taste of things to come.

When efficiency becomes the only metric that matters, something important gets left behind. The temptation to treat design as a line item to be optimized away, rather than a discipline that creates meaningful human experiences, takes over.

Everyone's Responsibility

AI systems learn from data. Vast oceans of it, scraped from the internet and derived from the creative work of countless (unpaid) people. LLMs are sophisticated pattern-matchers. Impressive ones, to be sure. But they cannot empathize. They don't rationalize. They reflect back what they're fed, and what they're fed is a dataset full of centuries of blind spots and marginal thinking.

Now, human designers aren't exactly pure vessels of unbiased wisdom, either. We're all shaped by our experiences, our cultures, our educations. There is real truth in that. But here is the critical difference. We can empathize and rationalize. We can sit across from someone whose experience of the world is fundamentally different from ours, feel the gaps in our understanding, and make conscious decisions to do better. We can confront our assumptions and redesign not just the product, but our own thinking.

That is the soul of inclusive design. And it's something a model trained on existing data cannot do. Not without deliberate human intervention.

Here are some facts. Approximately 13% of the global population lives with some form of disability. Yet research suggests that only around 3% of websites are meaningfully accessible to them. When you train AI on that same internet, you teach it to reproduce the same exclusions at scale.

The people building AI systems are not, as a general rule, the same people navigating the world with a screen reader, or trying to complete a form with limited motor control, or processing dense content through the lens of dyslexia. The data for those people is incomplete. The assumptions are inherited. And without designers actively asking hard questions like, "Who is this failing?" and "Who did we forget?" the output will never be inclusive. It will be a polished simulation of inclusion.

Accessibility isn't a checklist item or a plugin setting. It's a mindset. A professional commitment. A moral stance. And you can't automate a moral stance. Not without including the people who live it in the conversation.

We can't create equitable solutions from inequitable data. Training a model on a broken system and expecting it to produce inclusive outcomes is, to put it charitably, optimistic to the point of delusion. Until we address the source, the raw material of the web, progress will stagnate. It'll be the same old patterns, just faster and scaled up.

Poorly Performing Prompt Products

Here's another concern worth naming plainly. What happens to the next generation of designers in a world where AI can generate "good enough" at speed?

Senior designers will likely weather this transition reasonably well, at least for a while. Their years of experience navigating complex stakeholder dynamics, advocating for accessibility in tight-timeline environments, and translating messy human needs into coherent product decisions are genuinely valuable. That kind of institutional wisdom doesn't become obsolete overnight.

Junior designers, however, face a more precarious situation. When a product manager can generate passable mockups before lunch with a well-crafted prompt, the business case for hiring and mentoring early-career talent becomes harder to justify. And when there's no one asking inconvenient questions, the quality of what gets built quietly erodes.

The risk is a talent ecosystem that hollows out from the bottom. Fewer people entering the profession means fewer people developing the expertise that makes design meaningful. Fewer voices challenging assumptions means fewer products that work for marginalized groups. It's a slow-moving problem, but it's real.

The Light At The End

Here is the good news. AI tools, used thoughtfully, can make designers better at their jobs. Not by replacing design thinking, but by clearing away the work that gets in the way of it.

Think about the kinds of tasks that consume hours without adding creative value. Cleaning up inconsistent layer-naming conventions, swapping placeholder colors for properly tokenized variables, converting raw research notes into structured journey maps, replacing ad hoc components with library-standard equivalents. These are important tasks, but they aren't the heart of the work.

When AI handles the operational overhead, designers can redirect their energy toward the things that actually require human judgment. Some examples of where AI assistance genuinely helps:

- Optimizing component structures to reduce unnecessary complexity and layer bloat

- Translating user research notes into visual artifacts like journey maps or affinity diagrams

- Enforcing design system consistency by replacing one-off elements with shared components

- Generating structured documentation for component properties and usage guidelines

- Standardizing naming conventions across large, complex design files

These aren't trivial contributions. Time reclaimed from tedium is time available for craft. That's a genuine value proposition, and it is worth embracing with both hands.
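To make one of those tasks concrete, here is a minimal sketch of what standardizing layer names might look like in Python. The target convention (lowercase, hyphenated segments separated by slashes) and the function name `normalize_layer_name` are assumptions made for illustration. This isn't part of Figma or any particular tool, and a real team would substitute its own naming rules.

```python
import re


def normalize_layer_name(name: str) -> str:
    """Rewrite a messy layer name as lowercase, hyphenated segments joined by '/'.

    Hypothetical convention for illustration only; swap in your team's rules.
    """
    # Treat forward slashes and backslashes as hierarchy separators.
    segments = [s for s in re.split(r"[/\\]+", name) if s.strip()]
    cleaned = []
    for segment in segments:
        # Insert a space at camelCase boundaries: "PrimaryLarge" -> "Primary Large".
        spaced = re.sub(r"(?<=[a-z0-9])(?=[A-Z])", " ", segment)
        # Collapse every run of non-alphanumeric characters into one hyphen.
        kebab = re.sub(r"[^A-Za-z0-9]+", "-", spaced).strip("-").lower()
        if kebab:
            cleaned.append(kebab)
    return "/".join(cleaned)


if __name__ == "__main__":
    for raw in ["Button / PrimaryLarge", "icon_Arrow  left copy 2", "Frame 1234"]:
        print(f"{raw!r} -> {normalize_layer_name(raw)!r}")
```

Notice what the script can't do. It can make "Frame 1234" tidy, but it can't tell you what that frame is for. Giving a layer a meaningful name is still a human call.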

Liberation Or Exploitation: Choose Your Own Adventure

There was a vision, not so long ago, of what technology-assisted work might look like. In this vision, machines handled the repetitive and the rote, freeing human beings to do more of what makes us distinctly human. Create, connect, care, think laterally, solve problems that do not have obvious answers. It was a compelling pitch, and it still is.

The question is whether we are building toward that vision or away from it. Right now, in many organizations, AI is being deployed not to liberate workers but to extract more output from fewer of them. The efficiency gains flow upward. The burden flows downward. That is not an AI problem, exactly. It is an incentives problem. A fundamentally human problem that AI is making easier to execute at scale.

Here's what I know. AI is not ready to replace designers. Not because the technology is unimpressive, but because design is not primarily about generating visual outputs. It's about understanding people, including people whose experiences differ dramatically from the mainstream. It's about wondering who's missing from the conversation and then doing the work to bring them in.

So here's the practical challenge. Use the tools. Use them enthusiastically. Automate the repetitive work, accelerate the mundane, and reclaim the hours that administrative overhead has been quietly stealing. But don't mistake the tool for the work. Don't let the speed of AI-generated output become an excuse to skip the harder, slower, more human work of building things that actually work for people.

The soul of good design is empathy. The commitment to inclusion is a professional and ethical obligation, not a feature request. And defending that commitment, especially when inconvenient, is worth every bit of the effort it requires.